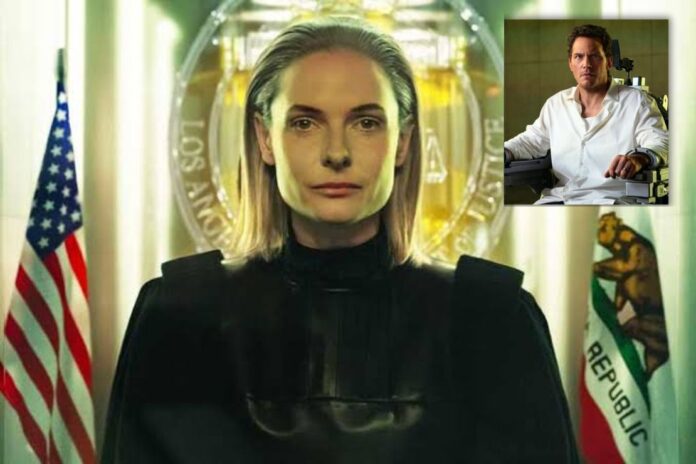

AI Judges Promise Efficiency, but Mercy Highlights the Risks of Bias, Lack of Empathy, and Flawed Data in the Justice System

The movie Mercy, available on Amazon Prime Video, explores a future where artificial intelligence replaces human judges in the courtroom. Instead of a person listening to testimony and making a final decision, a powerful AI system analyzes evidence and determines whether someone is guilty or innocent. Watching this movie raises an important question about the future of justice: should artificial intelligence have the power to decide someone’s fate?

Can AI Judges Solve the Justice System?

In the film, those who run the justice system believe that AI judges will solve many of the courts' problems. Human judges can be biased or influenced by emotions, stress, or personal beliefs. Artificial intelligence, however, is designed to examine facts and data without emotion. Supporters in the movie believe that an AI judge would make the legal system more efficient and consistent, because it can process large amounts of information in seconds, review evidence quickly, and avoid the human errors that sometimes affect trials.

Justice Requires Human Feelings

Even though this idea sounds beneficial at first, I personally do not think an AI judge should replace human judges. Justice is not just about rules and data. It also requires understanding people, their experiences, and the circumstances surrounding a crime. Human judges can look at a person’s background, listen to their story, and recognize emotions such as regret or remorse. An artificial intelligence system cannot truly understand those things because it processes information; it does not experience human feelings.

No Mercy in AI Judges

Another important part of justice is mercy. Mercy allows a judge to show compassion and give someone a second chance when the situation calls for it. For example, a judge might give a lighter sentence to someone who made a mistake but shows genuine effort to change their life. A machine cannot feel compassion or empathy, which means it may only follow strict rules or patterns in data. This could lead to decisions that are technically correct but morally unfair.

There is also the issue of the data that trains artificial intelligence systems. AI learns from past information and historical records. If past court decisions included bias or unfair treatment toward certain groups, the AI could repeat those same patterns. Instead of fixing problems in the justice system, the technology might simply automate them. This creates a risk that people could be judged by flawed data rather than by a fair and thoughtful human decision.

Mercy is streaming on Amazon Prime Video.
